31 July 2020: Review Paper

Applicability of Augmented Reality in an Organ Transplantation

Maciej Kosieradzki1ADEF, Wojciech Lisik1ACEF, Radosław Gierwiało2B*, Robert Sitnik2G

DOI: 10.12659/AOT.923597

Ann Transplant 2020; 25:e923597

Abstract

Augmented reality (AR) delivers virtual information or some of its elements to the real world. This technology, which has been used primarily for entertainment and military applications, has vigorously entered medicine, especially radiology and surgery, yet has never been used in organ transplantation. AR could be useful in training transplant surgeons, promoting organ donation, graft retrieval and allocation, microscopic diagnosis of rejection, and treatment of complications and post-transplantation neoplasms. The availability of AR display tools such as smartphone screens and head-mounted goggles, the accessibility of software for automated image segmentation and 3-dimensional reconstruction, and algorithms allowing registration make AR an attractive tool for surgery, including transplantation. The shortage of hospital IT specialists and insufficient investment by medical equipment manufacturers in the development of AR technology remain the most significant obstacles to its broader application.

Keywords: Education, Organ Transplantation, Surgery, Computer-Assisted, augmented reality

Background

Augmented reality (AR) and virtual reality (VR) are fast-developing technologies that will revolutionize most branches of contemporary medicine, from neurosurgery to teaching. AR delivers virtual information or its elements to the real world, as in the Pokemon Go game, where, with a smartphone in hand, a player seeks out digital characters in a real neighborhood. Today more than 200 AR applications and platforms are offered for Android devices. VR is just the opposite: it immerses a user in a completely digital world, shutting out the physical environment almost entirely. HTC Vive and Samsung Gear VR goggles are popular devices able to transport anyone to the virtual world. The newest technology is mixed reality, which combines AR and VR features so that digital and real-world objects interact. Microsoft HoloLens 2 is probably the most advanced prototype in this field; its practical applications for medicine are still under development with Philips Azurion technology for image-guided minimally invasive surgery.

AR in General Surgery

The computer systems currently used in surgery improve planning, help intraoperative navigation, and enhance postoperative control [1,2]. Using diagnostic imaging in preoperative planning allows 3-dimensional (3D) visualization of anatomical structures, including blood vessels and tumors, and the design of planes and axes. In the most advanced imaging systems, digital avatars of specific surgical instruments will make it possible to predict their usefulness in a given operating environment. Preoperative digital images must be segmented, i.e., divided into graphically homogeneous areas representing distinct anatomical structures [3]. However, planning cannot be completed without the surgeon, who identifies the important anatomical structures and the graphic elements to be overlaid. Intraoperative navigation usually transfers the results of preoperative planning into the operating room (OR). This step, however, requires automated, often real-time registration, i.e., the transformation of different data sets, for instance data coming from different diagnostic studies, into a single coordinate system, so that the segmented diagnostic images are aligned with the real anatomy of the patient. The video image of the patient's body and the 3D-rendered reconstruction are synchronized using fixed markers such as the umbilicus, the easily palpable iliac spine, or the costal edge [4]. A significant obstacle in the registration of preoperative imaging is the movement and deformation of soft organs resulting from surgical access, respiration, heartbeat, surgical manipulation, and separation of tissues [5,6]. Biomechanical models of tissue deformation or repeated intraoperative imaging can solve this problem. Registration of 2 different images may introduce a systematic error, though registration accuracy today is very high, with an average error of less than 1 mm [7].
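
The landmark-based registration described above can be illustrated with a minimal sketch. It assumes a rigid (rotation plus translation) transform and, for brevity, works in 2D rather than 3D; the function names and landmark coordinates are hypothetical and do not come from any of the cited systems. The mean residual distance between mapped and observed landmarks is one simple way to express a registration error in millimeters.

```python
import math

def rigid_register_2d(source, target):
    """Least-squares rotation and translation mapping source landmarks onto target."""
    n = len(source)
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(p[0] for p in target) / n
    ty = sum(p[1] for p in target) / n
    dot = cross = 0.0
    for (ax, ay), (bx, by) in zip(source, target):
        # Work with coordinates relative to each landmark set's centroid.
        ax, ay, bx, by = ax - sx, ay - sy, bx - tx, by - ty
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)  # optimal rotation angle in 2D
    c, s = math.cos(theta), math.sin(theta)
    # Translation that moves the rotated source centroid onto the target centroid.
    shift = (tx - (c * sx - s * sy), ty - (s * sx + c * sy))
    return theta, shift

def apply_transform(theta, shift, point):
    """Rotate a point by theta and then translate it by shift."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * point[0] - s * point[1] + shift[0],
            s * point[0] + c * point[1] + shift[1])

def mean_landmark_error(theta, shift, source, target):
    """Mean distance between transformed source landmarks and target landmarks."""
    distances = [math.dist(apply_transform(theta, shift, a), b)
                 for a, b in zip(source, target)]
    return sum(distances) / len(distances)

# Hypothetical landmark positions (mm) in the preoperative image.
image_landmarks = [(0.0, 0.0), (120.0, 10.0), (60.0, 150.0)]
# The same landmarks as seen on the patient: rotated by 30 degrees and shifted.
patient_landmarks = [apply_transform(math.radians(30), (5.0, -2.0), p)
                     for p in image_landmarks]

theta, shift = rigid_register_2d(image_landmarks, patient_landmarks)
error_mm = mean_landmark_error(theta, shift, image_landmarks, patient_landmarks)
```

A rigid model of this kind cannot follow soft-organ deformation, which is why the text points to biomechanical models or repeated intraoperative imaging.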

The image is usually projected on a monitor screen, and the actual operating field is not always visible. An operator continually has to change eye focus, from the screen to the operating field and back, and use their own spatial imagination to connect both pictures. The viewpoint of the computer-generated images is usually static and differs from that of the operator. Changing the viewpoint of the projected images requires third-party assistance or an interface located outside the operating field. Dynamic adjustment of the viewpoint direction is therefore a very desirable feature of the final system design. These limitations are particularly important during intraoperative 2D diagnostics (classical x-ray radiology and ultrasonography), which require highly developed spatial orientation and eye-hand coordination. This takes a lot of training, and the learning curve is long. Once the problems of real-time registration are resolved, a digital, realistic 3D image of the operating field and anatomy will be available, with tracking of the movements of the surgical instruments. Another problem to be resolved in the design of new AR solutions is the need for fast detection of errors such as incorrect registration and wrong tool tracking. This is very difficult when the physical operating field is not visible to the surgeon. Finally, the time it takes to complete the registration and mathematical conversions and to navigate correctly is also important. These steps should not significantly delay the projection of AR (changes of the view angle and the alignment of the instruments should be registered in as short a time as possible). The procedure, usually performed under general anesthesia, must take as little time as possible.

Current status of AR

Contemporary systems for AR can be grouped into 5 major categories [8].

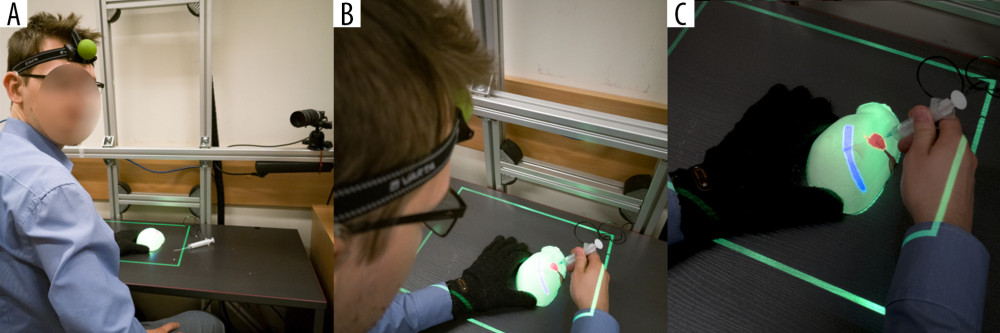

The authors of this manuscript have developed another experimental AR system, MARVIS (Medical AR VISualizer) [25], which does not fit into any of the aforementioned categories. We used spatial recognition of the phantom surface and the operator’s head position in real time. The monoscopic projector hanging over an operating field displays the CT-acquired AR image of the target lesion hidden inside the phantom on its uneven surface, as seen from the operator’s point of view. Head movement changes the view angle and shape of the displayed image, enabling an operator to place the needle precisely in the hidden target (Figure 1) [25].
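
The viewpoint dependence described above can be sketched geometrically. The example below is not the MARVIS implementation: it assumes, as a simplification, that the surface is a flat plane (the phantom surface in the text is uneven) and that the hidden target should be drawn where the operator's line of sight to the target crosses that surface. The function name and coordinates are hypothetical.

```python
def project_to_surface(eye, target, plane_point, plane_normal):
    """Return the point where the eye-to-target sight line crosses a planar surface."""
    direction = tuple(t - e for e, t in zip(eye, target))
    denom = sum(d * n for d, n in zip(direction, plane_normal))
    if abs(denom) < 1e-12:
        raise ValueError("sight line is parallel to the surface")
    # Parameter along the sight line at which the plane is reached.
    t = sum((p - e) * n for e, p, n in zip(eye, plane_point, plane_normal)) / denom
    return tuple(e + t * d for e, d in zip(eye, direction))

# Hypothetical setup (mm): surface is the plane z = 0, target lies 50 mm below it.
surface_point = (0.0, 0.0, 0.0)
surface_normal = (0.0, 0.0, 1.0)
hidden_target = (0.0, 0.0, -50.0)

# Operator looking straight down, then after moving the head 100 mm sideways.
spot_centered = project_to_surface((0.0, 0.0, 500.0), hidden_target,
                                   surface_point, surface_normal)
spot_shifted = project_to_surface((100.0, 0.0, 500.0), hidden_target,
                                  surface_point, surface_normal)
```

In this sketch the displayed spot moves from directly above the target to about 9 mm to the side when the head moves 100 mm, which is the kind of shift a head-tracked display has to reproduce.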

AR in Transplantology

So far, there are no reports on the use of AR in organ transplantation. We are certain, however, that with the development of applications and the popularization of AR outside the field of medicine, both mixed reality and AR will become important tools in organ transplantation. Although it has never been tried, AR could be used to promote organ donation. In 2016, the British National Health Service (NHS) launched a campaign encouraging blood donation. In short, when you put a sticker on your arm and scanned it with an iPhone or Android application, you could see a needle and a tube put into your arm on the screen of your smartphone. If you held it long enough, an outdoor digital screen would display a bag of blood being filled and the condition of a real transfusion recipient improving. Finally, the outdoor screen displayed thanks and your first name [26]. It is difficult to say how many additional blood donations this project was responsible for, but the campaign won an annual competition run by the Ocean Outdoor and the

In addition, having scanned the donor and recipient at a certain stage of preparation for transplantation, a physician could align them in a single reconstruction (registration) in a VR or AR environment and decide whether the donor's liver, heart, or lung matches in size and would fit in the recipient's body.

Virtual Surgical Training

The Cleveland Clinic, Ohio, USA, in cooperation with Microsoft, is building a future health education facility with HoloLens-generated AR at the core of its program. It provides students with a 21st-century holographic tool for learning gross anatomy, histology, embryology, radiology, and pathology. Thus, a teacher need not be present in person in the class to ensure effective learning [30]. The same technology, or VR, could be used to teach transplantation specialists to correctly assess an organ to be retrieved, take a biopsy sample using ultrasound or CT, interpret biopsy results, and perform laparoscopic or robotic organ retrieval and transplantation. Interactive teaching programs in AR or VR will eventually substitute for the courses requiring personal attendance that are currently offered by the transplantation societies. All educational UNOS Connect and ESOT programs, such as Hesperis, Academia, and LIDO (Living Donor Nephrectomy) hands-on courses, could be organized in an AR environment with improved cost and time efficiency. One significant drawback will be the lack of personal contact, which builds team spirit, trust, and the desire to improve. Teaching transplantation surgery requires one-on-one tutorship by a more experienced surgeon. The learning curve is long, and the teacher must be present in person during organ retrieval and transplantation. Expert surgeons are few, and their number is not likely to grow. With a video camera and AR goggles, an inexperienced surgeon could be instructed to identify vital anatomical structures, interpret the color and structure of the liver and pancreas, and assess heart contractility and the condition of the lungs. Steps of the retrieval and transplantation procedure could be followed and controlled from a distance, and even a small time lag, which is an obstacle in long-distance robotic surgery, would not be a limiting issue here.
Similarly, a trainee could be instructed by an off-theatre mentor when performing transplantation. With a video camera mounted on the goggles used by the operator, an instructor could follow the whole procedure and indicate the vital structures or moves, displaying their remarks with AR directly on the operating field.

AR in the Assessment of Anatomical and Pathological Structures

As transplantation patients today have a long life expectancy even while exposed to immunosuppressive drugs, they suffer from the same diseases as the general age-matched population, with similar or increased morbidity. Non-Hodgkin lymphoma is 7 times, lung cancer 2 times, liver cancer 11 times, and kidney cancer 7 times more frequent in transplant recipients [31,32]. Kidney tumors occur in both native and transplanted kidneys, and nephron-sparing surgery could be a reasonable therapeutic option. For example, Dutch researchers reported the use of HoloLens-visualized AR in planning surgery in 7 pediatric patients with Wilms tumor. They used commercially available software for image segmentation, 3D reconstruction, repair of minor artifacts, and creation of a proper application. The result was precise tumor visualization in relation to the vital anatomical structures, including blood vessels and urinary collecting structures. Surgeons rated the AR holographic visualization as more informative and reported a more detailed understanding of the kidney anatomy [33]. Such technology can be very useful in planning kidney retrieval from living donors, where a perfect assessment of kidney 3D anatomy, and especially vasculature, is key to the success of transplantation and donor safety. Approximately 9.4% of liver transplantation candidates in the USA need transplantation for hepatocellular carcinoma [7,34]. Almost 6% of these patients never make it to transplantation because of disease progression; hence, there is an urgent need for alternative or bridging therapies during enlistment procedures and while on the waiting list. Of the options available (chemotherapy, radiotherapy, chemo(radio)embolization, ablation, resection), surgical resection gives the best results in terms of long-term patient survival, with thermal ablation the second best.
All these methods are also used to downstage tumor burden and to transplant initially untransplantable patients, with acceptable results [35]. Even in low-risk patients, the tumor will recur in 15% to 20% of the transplanted livers [36], requiring intensive treatment. Besides, a good proportion of kidney transplant recipients have a history of hepatitis C virus or hepatitis B virus infection and are at a high risk of developing primary liver cancer. As recipients have routine Doppler ultrasound at least once a year, they are likely to be diagnosed in the early stage of the disease. Because the liver is covered by the chest wall and is anatomically complex and variable, targeting a tumor for biopsy to confirm the diagnosis, or for ablation to treat it, is relatively complicated. Resections, particularly those performed laparoscopically, require a lot of surgical expertise and have a long learning curve. Application of AR with information on the anatomical location of the large vessels and the biliary tree could shorten the learning curve and improve patient safety and accessibility to treatment while enhancing effectiveness at the same time. Implementation of AR in liver surgery can help design the best access incision line and the placement of laparoscopic (or robotic) instrument ports, navigate the track and aim of a percutaneous biopsy, position ablation electrodes or high-dose radiotherapy catheters, and show the position of the tumor, large vessels, and bile ducts within the liver to preserve a safe resection margin and avoid collision with the vital anatomical structures [4,17,37]. The University of Bonn has proposed a HoloLens and Moverio BT300 based system to navigate radiofrequency ablation needles into a liver tumor.
The most difficult issue, target movement with respiration and liver deformation, was dealt with in real time by a statistical model that adjusted the internal target position to the movement of external markers derived from 4D-CT scans, aligned to the video image in a living animal. Using traditional CT-guided navigation, after 3 position adjustments, the needle was located 2.6 mm from the center of the lesion; AR navigation required only 1 adjustment to achieve similar accuracy [38]. Most researchers accept an error of 5 mm in locating a liver tumor. Of course, such real-time computations require sophisticated mathematical algorithms and a lot of computational power, and currently they are inherently associated with a time lag between the actual and observed environment, as the systems can generate only 4 frames of moving image per second [39].
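
The idea of predicting an internal target position from external markers, and the lag implied by a low frame rate, can be sketched as follows. This is only an illustration: it assumes a simple one-dimensional linear relation fitted by ordinary least squares, which is not necessarily the statistical model used in the cited study, and the sample values are invented for the example.

```python
def fit_linear(marker_positions, target_positions):
    """Ordinary least-squares fit: target = slope * marker + intercept."""
    n = len(marker_positions)
    mean_m = sum(marker_positions) / n
    mean_t = sum(target_positions) / n
    sxx = sum((m - mean_m) ** 2 for m in marker_positions)
    sxy = sum((m - mean_m) * (t - mean_t)
              for m, t in zip(marker_positions, target_positions))
    slope = sxy / sxx
    return slope, mean_t - slope * mean_m

def predict_target(model, marker_position):
    """Estimate the internal target position from one external marker reading."""
    slope, intercept = model
    return slope * marker_position + intercept

def frame_interval_ms(frames_per_second):
    """Time between consecutive displayed frames, in milliseconds."""
    return 1000.0 / frames_per_second

# Invented training pairs (mm), standing in for phases of a 4D-CT scan:
# external skin-marker displacement versus internal target displacement.
marker_mm = [0.0, 2.0, 4.0, 6.0, 8.0]
target_mm = [1.0, 6.0, 11.0, 16.0, 21.0]

model = fit_linear(marker_mm, target_mm)
estimated_target = predict_target(model, 5.0)  # marker reading during the procedure
interval = frame_interval_ms(4)                # 4 frames per second, as in the text
```

At 4 frames per second the displayed image can be up to 250 ms old, so any target motion during that interval adds to the localization error.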

Once the problem of respiratory movement of the target is solved, AR navigation could also be applied to the biopsy of chest tumors in solid organ transplant recipients. In fact, the Navigation Technology team developed the image-guided video-assisted thoracoscopic surgery (iVATS) system, which uses an AR navigation system to remove early-stage lung tumors with minimally invasive surgery [40]. They performed a preliminary clinical trial in 23 patients with tumors 6 to 18 mm in diameter and achieved complete removal of the tumors with safe margins and minimal post-operative complications. While the system was not very complicated, with the C-arm and fiducials to align CT scans to the video image, the surgeon had to translate the 3D-reconstructed CT and x-ray images with fiducials into real life [40]. As lung deflation is a major obstacle in following the lesion, a system to mark landmarks in AR with a tooltip and continuous tissue tracking is currently under development by Philips [41]. A properly set up future OR equipped with cyber-infrastructure would allow 3D lung dynamics from deformable models to be displayed on the surface of the patient's body [42]. Emergency patients with pneumothorax or hydrothorax, lung surgery patients, and transplantation patients could benefit from such AR-derived additional information. Lung cancer occurs more often in lung transplantation patients, and lung tumors derived from post-transplant lymphoproliferative disorder (PTLD), which affect almost 5% of patients, result in early mortality [43]. Breast cancer in transplantation patients has a similar occurrence to that in the general population, but the results of treatment are much inferior [44]. Quality of Life Technology Labs designed an AR application for breast tumor visualization that uses 3D mammography and renders a model of the tumor on the patient's body, using a head-mounted device and an Android smartphone to facilitate screening and planning for breast surgery [45].

AR in the Treatment of Post-Transplant Pathologies

Colorectal cancers in solid organ transplant recipients occur only 10% more often than in the general population, yet the increase is mostly due to the proximal colon localization, which is less accessible and more difficult to diagnose early. Primary sclerosing cholangitis and cystic fibrosis patients are at significantly greater risk. Laparoscopic and robotic surgery is used more and more often for colon cancer resection. Although not quite AR, the TilePro multi-display was used for the presentation of 3D video image from an actual operating field, with the CT OsiriX-rendered 3D image of bowel anatomy next to it, in a pT4aN2b ascending colon cancer patient operated on with the DaVinci robot [46]. This technique prevented sight diversion from the operating field to the monitor, yet an operator still had to use spatial imagination to combine both images. The surgery was uneventful, and the surgeon assessed the 3D data presentation as very helpful. Similar technology was used to integrate real-time ultrasound data during robotic gynecological procedures [47].

Cardiovascular diseases are a leading cause of death in solid organ transplant recipients with a functioning graft [48]. Hence, a good proportion of these patients will require transcatheter procedures: angioplasty, stent placement, septal defect or foramen ovale closure, valve repair, and other procedures normally performed under x-ray fluoroscopy. Real-time 3D positioning was achieved in one study [49] using 2 fluoroscopic images taken from different angles, registered to preoperative CT and displayed holographically with Microsoft HoloLens. The registration error was only 0.4 mm and the computational time lag only 1.2 seconds, and the system granted better visualization to the interventionalist [49]. Polish cardiac surgeons used CT-acquired images and AR to navigate during transcatheter pacemaker placement in a patient with aberrant heart anatomy [50]. The same team reported successful revascularization of a chronically occluded coronary artery with AR visualization of the anatomy next to the operating field with Google Glass [51].
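
Recovering a 3D position from 2 views taken at different angles can be illustrated with a basic triangulation sketch. It assumes that each view yields a ray from a known source position through the imaged point and takes the midpoint of the shortest segment between the 2 rays; this is a generic geometric technique chosen for illustration, not the method of the cited study, and all coordinates are hypothetical.

```python
def dot(u, v):
    """Dot product of two 3D vectors."""
    return sum(a * b for a, b in zip(u, v))

def triangulate(origin1, direction1, origin2, direction2):
    """Midpoint of the shortest segment joining two rays given as origin + s * direction."""
    w = tuple(a - b for a, b in zip(origin1, origin2))
    a = dot(direction1, direction1)
    b = dot(direction1, direction2)
    c = dot(direction2, direction2)
    d = dot(direction1, w)
    e = dot(direction2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; two distinct view angles are required")
    s = (b * e - c * d) / denom  # position along the first ray
    t = (a * e - b * d) / denom  # position along the second ray
    p1 = tuple(o + s * k for o, k in zip(origin1, direction1))
    p2 = tuple(o + t * k for o, k in zip(origin2, direction2))
    return tuple((u + v) / 2.0 for u, v in zip(p1, p2))

# Hypothetical geometry (mm): two source positions and rays toward the same point.
source_a = (0.0, 0.0, 0.0)
source_b = (100.0, 0.0, 0.0)
ray_a = (10.0, 20.0, 30.0)
ray_b = (-90.0, 20.0, 30.0)

position_3d = triangulate(source_a, ray_a, source_b, ray_b)
```

With noisy measurements the 2 rays do not intersect exactly, and the midpoint serves as a compromise estimate; the length of the connecting segment gives a rough indication of how consistent the 2 views are.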

End-stage renal disease patients transplanted for polycystic kidney disease are at increased risk of intracranial aneurysm rupture (5–9%) [49,52]. Application of AR with HoloLens device for the diagnostic studies of patients with internal carotid artery intracranial aneurysms allowed accurate blood flow and rupture risk assessment [53]. Careful planning of surgery with VR has provided excellent treatment results but needs a lot of expertise and mental transfer of the analyzed data into a real-life setting [54]. AR could simplify the process. Video images from the neurosurgical microscope can already be augmented with Doppler ultrasound and MRI data, to locate the lesion precisely [55].

An AR microscope has recently been shown to improve histopathological diagnosis. Armed with a powerful artificial intelligence (AI) computer, the system displays areas of suspicion with AR through the eyepiece. Thanks to its high computational power, the AI is able to analyze a much higher number of fields than any pathologist in the same amount of time, and it reports all the suspicious areas to a human expert for decision making [56]. Although computational algorithms are used today to help diagnose rejection in kidney, heart, and liver grafts by analyzing the gene expression profile of a biopsy [57], the AR/AI-supported diagnosis platform requires less laboratory time, biostatistical analysis, and knowledge to process and understand the result.

Conclusions

Augmented reality (AR) is a state-of-the-art technology that is used primarily for entertainment and military applications. Although it is slowly being adopted in the medical field, in radiology and surgery, it has never been tried in organ transplantation. However, it could be useful in the training of transplant surgeons, as well as in promoting organ donation, graft retrieval and allocation, microscopic diagnosis of rejection, and treatment of complications and post-transplant neoplasms. The availability of AR display tools such as smartphone screens and reasonably priced head-mounted goggles, the accessibility of software for automated image segmentation and 3D reconstruction, and algorithms allowing registration make AR an attractive tool for surgery, including transplantation. A shortage of hospital IT specialists and the reluctance of medical device manufacturers to invest in the development of AR technology remain the most significant obstacles to its broader application.

References

1. Najmaei N, Mostafavi K, Shahbazi S, Azizian M, Image-guided techniques in renal and hepatic interventions: Int J Med Robot, 2013; 9; 379-95

2. Peterhans M, Oliveira T, Banz V, Computer-assisted liver surgery: Clinical applications and technological trends: Crit Rev Biomed Eng, 2012; 40; 199-220

3. Oliveira DA, Feitosa RQ, Correia MM, Segmentation of liver, its vessels and lesions from CT images for surgical planning: Biomed Eng Online, 2011; 10; 30

4. Volonté F, Pugin F, Bucher P, Augmented reality and image overlay navigation with OsiriX in laparoscopic and robotic surgery: Not only a matter of fashion: J Hepatobiliary Pancreat Sci, 2011; 18; 506-9

5. Zijlmans M, Langø T, Hofstad EF, Navigated laparoscopy – liver shift and deformation due to pneumoperitoneum in an animal model: Minim Invasive Ther Allied Technol, 2012; 21; 241-48

6. Bussels B, Goethals L, Feron M, Respiration-induced movement of the upper abdominal organs: A pitfall for the three-dimensional conformal radiation treatment of pancreatic cancer: Radiother Oncol, 2003; 68; 69-74

7. Kenngott HG, Wagner M, Gondan M, Real-time image guidance in laparoscopic liver surgery: First clinical experience with a guidance system based on intraoperative CT imaging: Surg Endosc, 2014; 28; 933-40

8. Shamir R, Joskowicz L, Shoshan Y, An augmented reality guidance probe and method for image-guided surgical navigation; 600-5

9. Navab N, Mitschke M, Schütz O, Camera-augmented mobile C-arm (CAMC) Application: 3D reconstruction using a low-cost mobile C-arm: Medical Image Computing and Computer-Assisted Intervention, 1999; 688-97

10. Stetten GD, Chib VS, Tamburo RJ, Tomographic reflection to merge ultrasound images with direct vision

11. Fichtinger G, Deguet A, Masamune K, Image overlay guidance for needle insertion in CT scanner: IEEE Trans Biomed Eng, 2005; 52; 1415-24

12. Fichtinger G, Deguet A, Fischer G, Image overlay for CT-guided needle insertions: Comput Aided Surg, 2005; 10; 241-55

13. Edwards PJ, Hawkes DJ, Hill DL, Augmentation of reality using an operating microscope for otolaryngology and neurosurgical guidance: J Image Guid Surg, 1995; 1; 172-78

14. Shahidi R, Bax MR, Maurer CR, Implementation, calibration and accuracy testing of an image-enhanced endoscopy system: IEEE Trans Med Imaging, 2002; 21; 1524-35

15. Kang X, Azizian M, Wilson E, Stereoscopic augmented reality for laparoscopic surgery: Surg Endosc, 2014; 28; 2227-35

16. Polaris Vicra System [Internet] Available from: https://www.ndigital.com/medical/products/polaris-family/systems/

17. Buchs NC, Volonte F, Pugin F, Augmented environments for the targeting of hepatic lesions during image-guided robotic liver surgery: J Surg Res, 2013; 184; 825-31

18. Nicolau S, Schmid J, Pennec X, An augmented reality & virtuality interface for a puncture guidance system: Design and validation on an abdominal phantom: Lecture Notes in Computer Science, 2004; 302-10

19. Perrodin S, Lachenmayer A, Maurer M, Percutaneous stereotactic image-guided microwave ablation for malignant liver lesions: Sci Rep, 2019; 9(1); 13836

20. Lachenmayer A, Tinguely P, Maurer MH, Stereotactic image-guided microwave ablation of hepatocellular carcinoma using a computer-assisted navigation system: Liver Int, 2019; 39; 1975-85

21. Blackwell M, Nikou C, DiGioia AM, Kanade T, An image overlay system for medical data visualization: Med Image Anal, 2000; 4; 67-72

22. Hua H, Gao C, Brown LD, Using a head-mounted projective display in interactive augmented environments; 217-23

23. Vogt S, Khamene A, Sauer F, Reality augmentation for medical procedures: System architecture, single camera marker tracking, and system evaluation: International Journal of Computer Vision, 2006; 179-90

24. Epson Moverio: Moverio BT-300– Epson [Internet] Available from: https://www.epson.pl/products/see-through-mobile-viewer/moverio-bt-300

25. Gierwiało R, Witkowski M, Kosieradzki M, Medical augmented-reality visualizer for surgical training and education in medicine: Applied Sciences, 2019; 9(13); 2732

26. Campaign: NHS encourages virtual blood donations with augmented reality outdoor ads [Internet] Available from: https://www.campaignlive.co.uk/article/nhs-encourages-virtual-blood-donations-augmented-reality-outdoor-ads/1395315

27. Nuanmeesri S, Kadmateekarun P, Poomhiran L, Augmented reality to teach human heart anatomy and blood flow: The Turkish Online Journal of Educational Technology, 2019; 18; 15-24

28. Visualizing and diagnosing reduced blood circulation with augmented reality and deep learning [Internet] Available from: https://www.mathworks.com/company/newsletters/articles/visualizing-and-diagnosing-reduced-blood-circulation-with-augmented-reality-and-deep-learning.html

29. Pereira N, Kufeke M, Parada L, Augmented reality microsurgical planning with a Smartphone (ARM-PS): A dissection route map in your pocket: J Plast Reconstr Aesthet Surg, 2019; 72; 759-62

30. Case Western Reserve University: Oxford Music Online, 2015

31. Engels EA, Pfeiffer RM, Fraumeni JF, Spectrum of cancer risk among US solid organ transplant recipients: JAMA, 2011; 306; 1891-901

32. Cheung CY, Lam MF, Chu KH, Malignancies after kidney transplantation: Hong Kong renal registry: Am J Transplant, 2012; 12; 3039-46

33. Wellens LM, Meulstee J, van de Ven CP, Comparison of 3-dimensional and augmented reality kidney models with conventional imaging data in the preoperative assessment of children with wilms tumors: JAMA Network Open, 2019; 2; e192633

34. Organ procurement and transplantation network: National Data – OPTN [Internet] Available from: https://optn.transplant.hrsa.gov/data/view-data-reports/national-data/#

35. Toso C, Meeberg G, Andres A, Downstaging prior to liver transplantation for hepatocellular carcinoma: Advisable but at the price of an increased risk of cancer recurrence – a retrospective study: Transpl Int, 2019; 32; 163-72

36. Filgueira NA, Hepatocellular carcinoma recurrence after liver transplantation: Risk factors, screening and clinical presentation: World J Hepatol, 2019; 11; 261-72

37. Tang R, Ma L-F, Rong Z-X, Augmented reality technology for preoperative planning and intraoperative navigation during hepatobiliary surgery: A review of current methods: Hepatobiliary Pancreat Dis Int, 2018; 17; 101-12

38. Li R, Yang T, Si W, Augmented reality guided respiratory liver tumors punctures: A preliminary feasibility study

39. Reichard D, Bodenstedt S, Suwelack S, Intraoperative on-the-fly organ-mosaicking for laparoscopic surgery: J Med Imaging (Bellingham), 2015; 2; 045001

40. Gill RR, Zheng Y, Barlow JS, Image-guided video assisted thoracoscopic surgery (iVATS) – phase I–II clinical trial: J Surg Oncol, 2015; 112; 18-25

41. Thienphrapa P, Bydlon T, Chen A, Interactive endoscopy: A next-generation, streamlined user interface for lung surgery navigation: Lecture Notes in Computer Science, 2019; 83-91

42. Hamza-Lup FG, Santhanam AP, Imielinska C, Distributed augmented reality with 3-D lung dynamics – a planning tool concept: IEEE Transactions on Information Technology in Biomedicine, 2007; 40-46

43. Kremer BE, Reshef R, Misleh JG, Post-transplant lymphoproliferative disorder after lung transplantation: a review of 35 cases: J Heart Lung Transplant, 2012; 31; 296-304

44. Wong G, Au E, Badve SV, Lim WH, Breast cancer and transplantation: Am J Transplant, 2017; 17; 2243-53

45. Ghaderi MA, Heydarzadeh M, Nourani M, Augmented reality for breast tumors visualization; 4391-94, IEEE

46. Volonté F, Pugin F, Buchs NC, Console-integrated stereoscopic OsiriX 3D volume-rendered images for da Vinci colorectal robotic surgery: Surg Innov, 2013; 20; 158-63

47. Walsh TM, Borahay MA, Fox KA, Kilic GS, Robotic-assisted, ultrasound-guided abdominal cerclage during pregnancy: Overcoming minimally invasive surgery limitations?: J Minim Invasive Gynecol, 2013; 20; 398-400

48. Munagala MR, Phancao A, Managing cardiovascular risk in the post solid organ transplant recipient: Med Clin North Am, 2016; 100; 519-33

49. Liu J, Al’Aref SJ, Singh G, An augmented reality system for image guidance of transcatheter procedures for structural heart disease: PLoS One, 2019; 14; e0219174

50. Opolski MP, Michałowska IM, Borucki BA, Augmented-reality computed tomography-guided transcatheter pacemaker implantation in dextrocardia and congenitally corrected transposition of great arteries: Cardiol J, 2018; 25; 412-13

51. Opolski MP, Debski A, Borucki BA, Feasibility and safety of augmented-reality glass for computed tomography-assisted percutaneous revascularization of coronary chronic total occlusion: A single center prospective pilot study: J Cardiovasc Comput Tomogr, 2017; 11; 489-96

52. Kuo IY, Chapman A, Intracranial aneurysms in ADPKD: Clin J Am Soc Nephrol, 2019; 1119-21

53. Karmonik C, Elias SN, Zhang JY, Augmented reality with virtual cerebral aneurysms: A feasibility study: World Neurosurg, 2018; 119; e617-22

54. Kockro RA, Killeen T, Ayyad A, Aneurysm surgery with preoperative three-dimensional planning in a virtual reality environment: Technique and outcome analysis: World Neurosurg, 2016; 96; 489-99

55. Vassallo R, Kasuya H, Lo BWY, Augmented reality guidance in cerebrovascular surgery using microscopic video enhancement: Healthc Technol Lett, 2018; 5; 158-61

56. Razavian N, Augmented reality microscopes for cancer histopathology: Nat Med, 2019; 25; 1334-36

57. Halloran PF, Potena L, Van Huyen J-PD, Building a tissue-based molecular diagnostic system in heart transplant rejection: The heart molecular microscope diagnostic (MMDx) system: J Heart Lung Transplant, 2017; 36; 119-200
